Statistical tools for valid causal inference with fewer assumptions

October 25, 2020

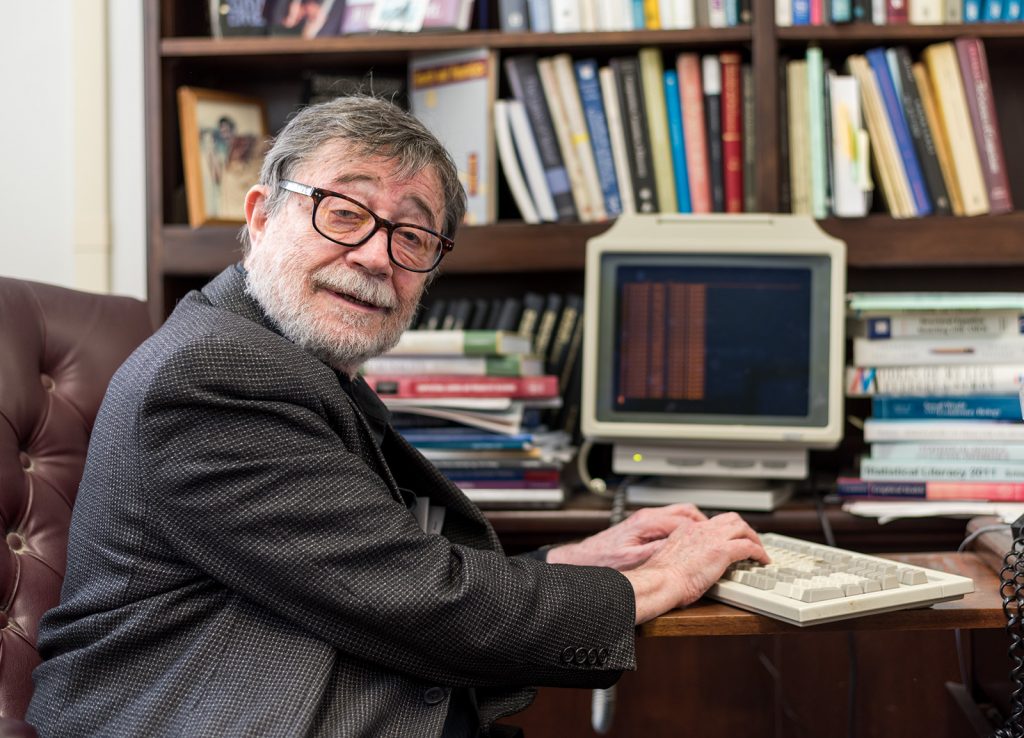

Judea Pearl, an emeritus professor of computer science at UCLA and pioneer in the field of artificial intelligence, checks his email on a 20-year-old Wyse terminal. (Joe Akira/Daily Bruin)

Judea Pearl’s fourth-grade teacher and classmates insisted he was wrong. They were convinced the area of a kilometer-length square was a thousand square meters, not a million as Pearl said.

Pearl was unfazed: He thought his teacher had made a mistake. Two days later, she apologized.

“That was when I first started questioning authority,” he said, chuckling.

Pearl, an emeritus professor of computer science at UCLA, built his career by challenging generally accepted conventions in the field of artificial intelligence.

When people upgraded to newer computers, Pearl continued to use his 20-year-old Wyse terminal.

“I always have my internet when everyone else is complaining about it,” he said while typing code onto the green and black screen to load his email.

When computer scientists said computers could only understand true or false, Pearl found a way to make them understand uncertainty.

When computer scientists said machines should be answering the question, “What?” Pearl said they should be answering the question, “Why?”

“He understands people aren’t necessarily going to agree with him, but when (Pearl) explains his logic, it all just seems so obvious,” said Kaoru Mulvihill, Pearl’s office assistant.

Mulvihill puttered around Pearl’s office, dusting first edition Francis Bacon books and the leather chair in the corner that Pearl sleeps in to maintain his strict nighttime working hours.

The walls are decorated with honorary degrees from universities that he can’t keep track of. Yellowed pages of scientific manuscripts are neatly slotted in bins around his desk, and trophies cover any flat surface that isn’t occupied by a stack of books.

The A.M. Turing Award, the most prestigious prize awarded to computer scientists by the Association for Computing Machinery, secures its spot in the center of Pearl’s bookcase.

Pearl won the award in 2011 for his probabilistic model of artificial intelligence.

In the late ’70s, before Pearl’s model, scientists tried to train computers to diagnose diseases using rule-based expert systems. They conducted hours of interviews with doctors and extrapolated a set of rules for the computer to follow.

However, the system had many problems. It took a long time to write the rules, and if one rule changed, the programmers had to rewrite the entire code. Most importantly, the machine failed to grasp a concept central to almost every human decision: uncertainty.

Doctors are rarely certain in their initial diagnosis when treating a patient, Pearl said. He added they deduce a diagnosis based on what they believe is the most likely explanation for the set of symptoms.

“When you have uncertainty, and you always have uncertainty … rules aren’t enough,” Pearl said. “The solution was probability.”

Pearl devised a mathematical model called a Bayesian network that allowed machines to factor uncertainty into their decision-making processes.

Machines could now look at a set of symptoms and consider the same probabilities that a doctor would, rather than blindly following a set of rules.
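To make the idea concrete, here is a minimal sketch of the kind of calculation such a network performs. It assumes a toy network in which a single disease node influences two symptom nodes; the diseases, symptoms and every probability below are hypothetical values chosen for illustration, not figures from Pearl's work or from any medical source.

```python
# Toy network: Disease -> Fever, Disease -> Cough.
# All numbers are made-up illustrative assumptions.
PRIOR = {"flu": 0.10, "cold": 0.30, "healthy": 0.60}

# P(symptom is present | disease), one table per symptom node.
P_SYMPTOM = {
    "fever": {"flu": 0.90, "cold": 0.20, "healthy": 0.01},
    "cough": {"flu": 0.80, "cold": 0.70, "healthy": 0.05},
}


def diagnose(observed):
    """Return P(disease | observed symptoms) using Bayes' rule.

    `observed` maps a symptom name to True (present) or False (absent).
    """
    scores = {}
    for disease, prior in PRIOR.items():
        likelihood = 1.0
        for symptom, present in observed.items():
            p = P_SYMPTOM[symptom][disease]
            likelihood *= p if present else (1.0 - p)
        scores[disease] = prior * likelihood
    total = sum(scores.values())  # normalize so the posteriors sum to 1
    return {disease: score / total for disease, score in scores.items()}


if __name__ == "__main__":
    for name, prob in diagnose({"fever": True, "cough": True}).items():
        print(f"{name}: {prob:.3f}")
```

With these assumed numbers, a patient with both fever and cough is most likely to have the flu, while a patient with a cough but no fever is most likely to have a cold. The program weighs likelihoods rather than firing a fixed rule.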

Pearl said that not all current algorithms use Bayesian networks, but most do.

Email spam folders use Bayesian networks to calculate the probability that an incoming email is spam. Scientists can use Bayesian networks to determine the most probable winner of a football game. Even Siri, the Apple virtual assistant, uses Bayesian networks to determine the most likely interpretation of a user’s command.

“(Siri) still can never understand my accent, though,” Pearl said.

However, since the introduction of Bayesian networks, the field of artificial intelligence has struggled to overcome another obstacle, Pearl said. Most computer scientists have successfully applied artificial intelligence to problems with uncertainty but have failed to push machines to understand cause and effect, he added.

He said this is largely because there has not been a mathematical language for causality until recently.

“Even if I told you what causes what, you could not put it down on paper,” he said.

So, Pearl created a new language.

It allowed scientists to both articulate causal questions and answer them. As detailed in Pearl’s “The Book of Why: The New Science of Cause and Effect,” using the language, machines could answer questions such as “Should I quit my job?” “Did the new advertising cause an increase in sales?” and “What if I had not smoked for the past two years?”
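The advertising question shows why correlation alone falls short. The sketch below assumes a hypothetical scenario in which the holiday season makes both advertising and high sales more likely. It compares the gap seen in observed data with the gap obtained from the back-door adjustment formula, one of the tools in Pearl's causal framework. Every variable name and probability here is an invented assumption for illustration only.

```python
# Hypothetical model: Holiday season (Z) affects both Ads and Sales.
# All probabilities are invented for illustration.
P_Z = {0: 0.5, 1: 0.5}            # P(holiday season = z)
P_AD_GIVEN_Z = {0: 0.2, 1: 0.8}   # P(ad is running | z)


def p_sales(ad, z):
    """P(high sales | ad, z): the ad itself adds only 0.1."""
    return (0.6 if z else 0.2) + (0.1 if ad else 0.0)


def p_ad(ad, z):
    """P(ad status | z)."""
    p = P_AD_GIVEN_Z[z]
    return p if ad else 1.0 - p


def observed(ad):
    """P(high sales | ad): what passively collected data would show."""
    joint = {z: P_Z[z] * p_ad(ad, z) for z in P_Z}
    total = sum(joint.values())
    return sum(p_sales(ad, z) * joint[z] / total for z in P_Z)


def intervened(ad):
    """P(high sales | do(ad)) via back-door adjustment over Z."""
    return sum(p_sales(ad, z) * P_Z[z] for z in P_Z)


naive_gap = observed(1) - observed(0)
causal_gap = intervened(1) - intervened(0)

if __name__ == "__main__":
    print(f"observed gap: {naive_gap:.2f}")
    print(f"causal gap:   {causal_gap:.2f}")
```

Under these assumptions the observed gap is about 0.34, but the gap after adjusting for the season is only about 0.10. Most of the apparent effect of advertising comes from the season, not from the ads.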

Pearl said this language has led to a causal revolution in multiple fields: Climatologists use the language to determine if a natural disaster is caused by climate change. Lawyers and employers use the language to determine if pay differences are caused by gender discrimination.

Professors have been slow to adopt the new mathematical language, he said. In the classroom, they still teach statistics centered around correlation instead of causation. Pearl said the resistance to change doesn’t surprise him.

“People go to war over a change in language,” Pearl said. “For (professors), it’s a trauma, it’s a paradigm shift, it’s heartbreaking.”

It is hard for scientists to abandon a language they have based their entire careers on, he said. That’s why he wrote his newest book on causality to appeal to students, not professors, Pearl said.

“When people change their minds in science, it’s not because (scientists) see the light in your theory, it’s because they simply die and their students see the light,” Pearl said.

Mark Handcock, the chair of the statistics department at UCLA, said the department is starting to develop graduate-level courses that will teach the causal language. It will begin developing undergraduate-level courses after the graduate courses launch, he said.

“It will take time for these ideas to be part of the undergraduate courses,” he said. “We will, however, get there.”

Pearl said he hopes students will read his book and demand that their statistics professors integrate causal reasoning into their classes.

“I want every student to rebel with confidence and a smile,” he said.
